Best Practices for Writing Tests That Can Run in Parallel

This article will walk you through some of the best practices for parallel testing that the experienced folks at Team Testery have learned (usually the hard way) over the last couple of decades of getting large test suites to run at high levels of parallelization.

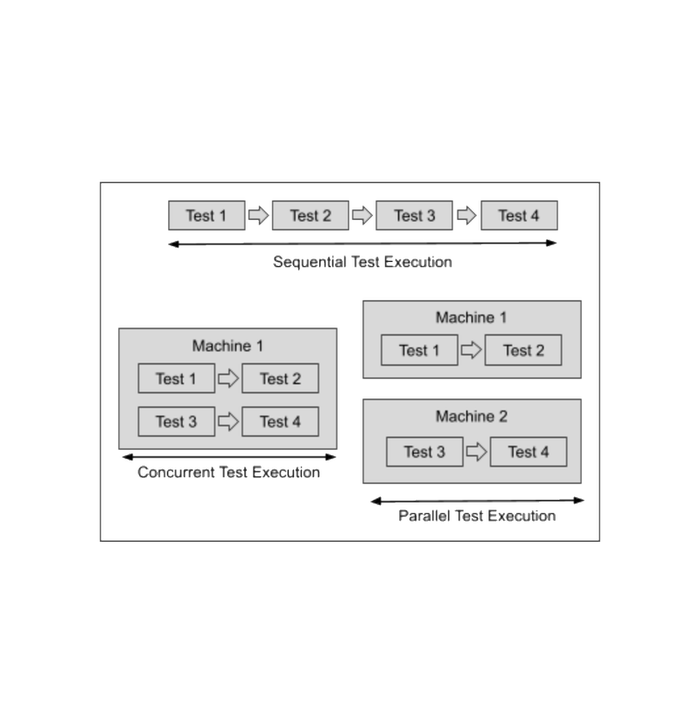

There are two main challenges in getting tests to run quickly and reliably in parallel. The first is getting the tests themselves to all run at the same time and report the results. Platforms like Testery make it simple to scale up as much as needed, but even if you don't need the scale of Testery you can still run multiple threads on a build agent to get faster test runs.

But the second big challenge is how to write the tests themselves so that they are able to run at the same time without impacting each other and producing flaky results.

Be Careful with Static Code Blocks and Thread-safety

This isn't an issue with the Testery platform, because Testery (unlike a number of other parallelization options) runs each test independently. So Testery is able to run at extremely high levels of parallelism even if those tests use static properties.

But if you're using threads to run tests in parallel, you'll want to be extremely careful when using static properties, code blocks, etc. The various tests will be reading and modifying these and could easily collide with each other. You'll also need to make sure any data types you use are considered "thread-safe".

Writing multi-threaded code properly can be challenging, especially when you start getting into synchronized blocks and shared contexts. Why go there if you don't have to?
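To make the collision concrete, here is a minimal Python sketch. `SharedConfig`, `risky_test`, `isolated_test`, and `run_pair` are hypothetical names invented for this illustration; the barrier only exists to force the bad interleaving so the problem shows up every time instead of once in a while.

```python
import threading


class SharedConfig:
    # Hypothetical class-level ("static") state that every test reads and writes.
    current_user = None


def risky_test(user, barrier):
    # Writes to shared state, waits for the other thread, then reads it back.
    SharedConfig.current_user = user
    barrier.wait()  # both threads have written before either one reads
    return SharedConfig.current_user == user


def isolated_test(user, barrier):
    # Keeps its state in a local variable that no other thread can overwrite.
    current_user = user
    barrier.wait()
    return current_user == user


def run_pair(test_fn):
    # Runs the same test on two threads and returns {user: passed}.
    barrier = threading.Barrier(2)
    results = {}

    def worker(user):
        results[user] = test_fn(user, barrier)

    threads = [threading.Thread(target=worker, args=(u,)) for u in ("alice", "bob")]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

With `risky_test`, one of the two threads always sees the other thread's value and fails. With `isolated_test`, both pass.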

Write Tests That Can Run in Any Order

While it can be convenient to have tests run in a particular order, this will greatly limit your ability to parallelize the tests. It can be tempting to write create, update, and delete tests as three separate tests that expect to run in a certain order, but this will limit your ability to scale up your testing.
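One way to break the ordering dependency is to have each test create the record it needs. The sketch below assumes a hypothetical `InMemoryCarStore` standing in for the system under test; the two scenario functions are illustrative, not part of any framework.

```python
import uuid


class InMemoryCarStore:
    """Hypothetical stand-in for the system under test."""

    def __init__(self):
        self._cars = {}

    def create(self, name):
        car_id = str(uuid.uuid4())
        self._cars[car_id] = name
        return car_id

    def update(self, car_id, name):
        self._cars[car_id] = name

    def get(self, car_id):
        return self._cars.get(car_id)

    def delete(self, car_id):
        del self._cars[car_id]

    def count(self):
        return len(self._cars)


def update_car_scenario(store):
    # Creates its own record instead of relying on an earlier "create" test.
    car_id = store.create("FORD")
    try:
        store.update(car_id, "FORD F-150")
        assert store.get(car_id) == "FORD F-150"
    finally:
        store.delete(car_id)  # clean up even if the assertion fails


def delete_car_scenario(store):
    # Also creates its own record, so it does not depend on the update test.
    car_id = store.create("FORD")
    store.delete(car_id)
    assert store.get(car_id) is None
```

Because neither scenario depends on the other, they can run in either order, or at the same time against separate records.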

Use Separate Instances for Automation and Manual Testing

This isn't always an option due to cost. But if your system doesn't have a large footprint it can be helpful to run automation in its own instance. This gives you more control over the state of the system and can help with creating reliable test runs. Having folks manually test in the same instance (especially folks whose job is to try to break things) can leave the system in a less predictable state.

Don't Assume State in Your Tests

The best tests set up for themselves and tear down when they're done (even if they failed). Tests that assume a feature toggle is enabled, a record exists in the database, a user setting is on, etc. are setting themselves up for failure when manual testers or other tests leave the system in a different state.
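As a rough illustration of "set up for yourself and tear down even on failure", the sketch below uses Python's `contextlib.contextmanager`. The `toggles` dictionary and the `feature_toggle` helper are hypothetical; in a real suite the helper would call whatever API your system uses to change toggles.

```python
from contextlib import contextmanager

# Hypothetical in-memory stand-in for the system's feature toggles.
toggles = {"new_checkout": False}

_MISSING = object()  # marks a toggle that did not exist before the test


@contextmanager
def feature_toggle(name, enabled):
    # Setup: remember the previous value, then set the state this test needs.
    previous = toggles.get(name, _MISSING)
    toggles[name] = enabled
    try:
        yield
    finally:
        # Teardown: runs even when the test body raises.
        if previous is _MISSING:
            toggles.pop(name, None)
        else:
            toggles[name] = previous
```

A test would wrap its body in `with feature_toggle("new_checkout", True):` rather than assuming the toggle is already on.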

Determine Your Test Isolation Strategy

Watch out for any functionality in your system-under-test that modifies the behavior for other sessions. Some examples are:

- New sessions return to last page visited

- Feature toggles that turn on/off functionality

- Creating and modifying data adjusts functionality

- Users can adjust user/account settings

Depending on the properties of your system and constraints you're dealing with, there are various strategies for creating test isolation.

Isolation by database transaction. With this approach, each test runs inside its own database transaction. At the end of the test, the transaction is rolled back, returning the database to its previous state and ensuring that tests always start from the same state. This can be great for reliability, but it isn't an option for many tests, especially end-to-end tests where database-level access may not be possible.
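Here is a minimal sketch of the rollback approach using Python's built-in `sqlite3` module and an in-memory database. The `cars` table and the helper names are assumptions made for the example; a real suite would do this through its own database driver or test framework.

```python
import sqlite3


def make_db():
    # Hypothetical baseline state: one committed row.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE cars (name TEXT)")
    conn.execute("INSERT INTO cars VALUES ('HONDA')")
    conn.commit()
    return conn


def run_in_rolled_back_transaction(conn, scenario):
    # Runs the scenario, then discards every uncommitted change it made.
    try:
        return scenario(conn)
    finally:
        conn.rollback()


def add_car_and_count(conn):
    # The INSERT implicitly opens a transaction that is never committed.
    conn.execute("INSERT INTO cars VALUES ('FORD')")
    return conn.execute("SELECT COUNT(*) FROM cars").fetchone()[0]
```

Inside the scenario the new row is visible; after the rollback only the committed baseline row remains.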

Isolation by data naming conventions. Here test data is created using a convention to help avoid conflicts. For example, if you're testing that you can add a new car to a list of cars, the new car that gets added could be a "TEST123 FORD". Then when asserting that the record was created, you'd only consider records that start with "TEST123" and ignore everything else.
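A sketch of the naming convention, assuming a plain Python list as a stand-in for the shared list of cars. Generating the prefix from a UUID (rather than hard-coding "TEST123") is an assumption added here so that two parallel tests do not pick the same prefix; the helper names are hypothetical.

```python
import uuid


def make_test_prefix():
    # Random per-test prefix, e.g. "TEST3F9A1C2B".
    return f"TEST{uuid.uuid4().hex[:8].upper()}"


def add_car(cars, prefix, model):
    # Every record this test creates carries the test's prefix.
    name = f"{prefix} {model}"
    cars.append(name)
    return name


def cars_for_test(cars, prefix):
    # Assertions only look at records owned by this test.
    return [c for c in cars if c.startswith(prefix + " ")]
```

Two tests adding a "FORD" at the same time each see exactly one matching record, even though the shared list contains both.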

Isolation by user. Sometimes there is functionality that will greatly alter the user experience. For example, there could be a user setting of "send emails" vs "send Slack messages". A test for sending emails needs the setting to be one value while a test for sending Slack messages needs the other. In some systems, a good way to handle this is to have tests run within their own user account. If you are working in a system where users shouldn't be able to impact other users, this may be a good option.

Isolation by account/organization. In this approach, each test (or a suite of tests) runs as a different account. This can be useful for testing things like feature toggles that may be set at the account level.

Use Multi-phase Test Runs

In multi-phase test runs, test runs are split up into sections. The tests within each section can run in parallel but then the sections themselves are sequential. You may want to do this to run a database setup suite that must complete before the functionality suite begins. Or you may want to do this to run smoke tests before starting a full regression run.
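The idea can be sketched with Python's standard `concurrent.futures` module. `run_phases` is a hypothetical helper, and stopping after a failed phase is an assumption made for the smoke-test case; your runner may choose to continue instead.

```python
from concurrent.futures import ThreadPoolExecutor


def run_phases(phases, max_workers=4):
    """Run phases in order; tests inside a phase run in parallel.

    phases: list of (phase_name, [callable, ...]); each callable returns
    True on pass and False on failure.
    """
    results = {}
    for name, tests in phases:
        # Leaving the "with" block waits for every test in this phase.
        with ThreadPoolExecutor(max_workers=max_workers) as pool:
            outcomes = list(pool.map(lambda test: test(), tests))
        results[name] = outcomes
        if not all(outcomes):
            break  # assumption: a failed phase skips the phases after it
    return results
```

For example, `run_phases([("smoke", smoke_tests), ("regression", regression_tests)])` would only start the regression phase once every smoke test has passed.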

Be Aware of Shared Resources

Anything that's a shared resource can be a potential source of conflict: the servers the tests run on, the servers the system-under-test runs on, the database, caching layers, etc. Depending on your database, there may be transactions that are blocking, meaning they won't allow other transactions to proceed until they complete. This can be hugely detrimental to parallelizing test runs. Some caching layers are fantastic at being read-many-times but suffer greatly when multiple threads are writing to them. You may also be putting significant load on the system-under-test, causing failures due to timeouts and performance issues.

We've even seen test runs hang and fail because a DBA left an uncommitted transaction on the test database open in an editor and went to lunch.

Be Generous in Your Timeouts

Hopefully your tests are using implicit and explicit waits appropriately instead of relying on sleep commands. But in either case, there is some timeout and you should be aware of what that timeout is.

If you're testing for performance, then you want those hard limits there and you want to make sure they're respected. But when you're testing for functionality at high levels of parallelism, performance may be less important and you may want to increase those timeouts so that the tests are more reliable even when the test environments (which may not be as performant as production) are under load.
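One way to keep timeouts adjustable is a small polling helper whose timeout can be scaled per environment. This is only a sketch: `wait_until` is a hypothetical helper, and the `TEST_TIMEOUT_MULTIPLIER` environment variable is a name made up for this example, not something any tool defines.

```python
import os
import time


def wait_until(condition, timeout=5.0, interval=0.05):
    """Poll condition() until it returns True or the timeout expires.

    The timeout is multiplied by TEST_TIMEOUT_MULTIPLIER (hypothetical
    environment variable, default 1) so slow environments can be more generous.
    """
    scale = float(os.environ.get("TEST_TIMEOUT_MULTIPLIER", "1"))
    deadline = time.monotonic() + timeout * scale
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)
```

Unlike a fixed sleep, this returns as soon as the condition holds, so a generous timeout only costs time when something is actually slow or broken.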

Hopefully this gives you a bit of a mental checklist to go through as you write your tests. Do you have anything else that should be on the list? Let us know!