Parallel Test Execution Guide

Running your tests in parallel is close to a necessity if you want high quality and fast feedback loops, and it is a prerequisite for continuous delivery in a timely manner. Parallel test execution reduces overall execution time, which makes teams more efficient. For best results, though, you need a strategy for writing tests that perform well when run in parallel. Consider best practices like:

- Keep your tests small. Keeping tests small will help your suite run efficiently and provide you with faster results.

- Avoid dependencies between test runs.

- Ensure each test uses its own test data and doesn’t depend on data created from a previous test.

- Avoid using shared state across tests.

- Consider tagging scripts to more easily run groups of tests.
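To make the data-isolation advice concrete, here is a minimal sketch using Python's built-in `unittest`. The class name, file name, and order values are made up for illustration. Each test builds its own data in a private temporary directory, so the tests pass in any order and can run at the same time.

```python
import tempfile
import unittest
from pathlib import Path


class OrderFileTest(unittest.TestCase):
    """Hypothetical tests that each create and clean up their own data."""

    def setUp(self):
        # A fresh directory per test: nothing is shared with other tests.
        self._tmp = tempfile.TemporaryDirectory()
        self.data_file = Path(self._tmp.name) / "orders.txt"
        self.data_file.write_text("order-1\n")

    def tearDown(self):
        # Remove this test's data so nothing leaks into later runs.
        self._tmp.cleanup()

    def test_append_order(self):
        with self.data_file.open("a") as handle:
            handle.write("order-2\n")
        lines = self.data_file.read_text().splitlines()
        self.assertEqual(lines, ["order-1", "order-2"])

    def test_initial_order_only(self):
        # Passes whether or not test_append_order ran first.
        lines = self.data_file.read_text().splitlines()
        self.assertEqual(lines, ["order-1"])
```

Because neither test reads anything the other wrote, a runner is free to schedule them on different threads, processes, or machines.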

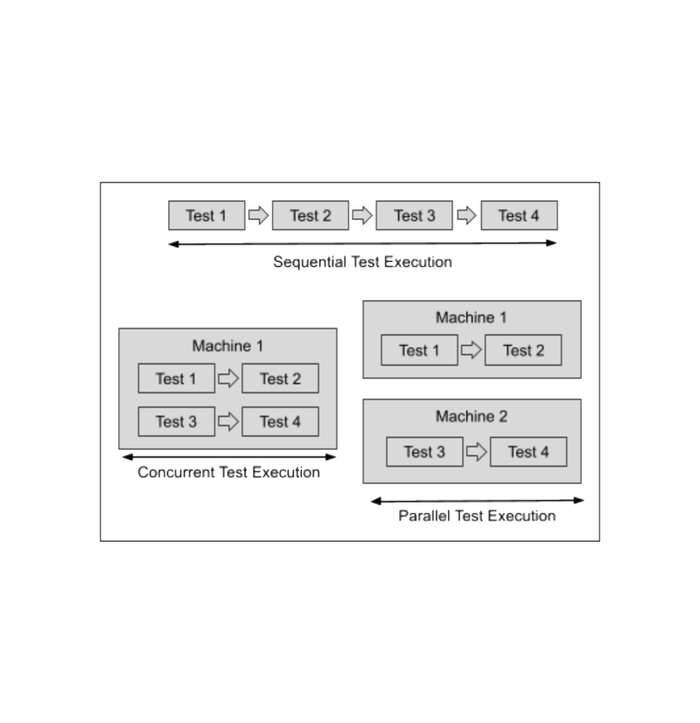

What is parallel testing?

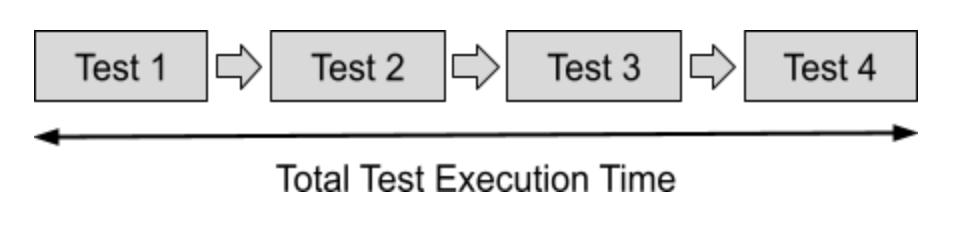

Parallel testing is running multiple tests at the same time across multiple machines in order to reduce the overall test execution time. Parallel testing is harder to set up than sequential testing, but it is well worth the effort. Here is the difference:

Sequential Test Execution

Parallel Test Execution

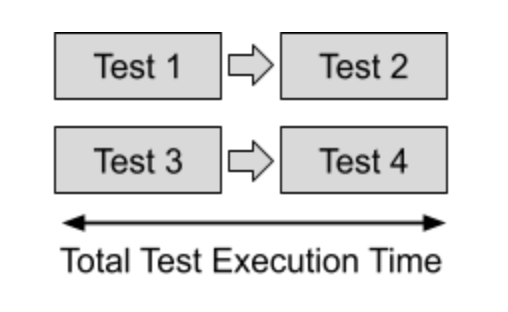

Parallel testing is also different from concurrent testing. The two are similar, but there is an important difference: concurrent testing runs multiple tests at the same time on the same machine. This matters because the number of concurrent tests you can run is limited by the capacity of that machine. With parallel testing you can spin up as many machines as needed to support your goals.
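As a rough illustration of concurrent testing on one machine, the sketch below runs stand-in tests on a thread pool capped at the machine's CPU count. `fake_test` and `run_concurrently` are hypothetical names, and the sleep stands in for real test work.

```python
import os
import time
from concurrent.futures import ThreadPoolExecutor


def fake_test(name):
    """Stand-in for a real test: waits briefly, then reports a pass."""
    time.sleep(0.05)
    return name, "passed"


def run_concurrently(test_names, max_workers=None):
    # The worker count is capped by what this one machine can handle.
    # That cap is the limit concurrent testing runs into.
    workers = max_workers or (os.cpu_count() or 1)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return dict(pool.map(fake_test, test_names))


results = run_concurrently([f"test_{i}" for i in range(8)])
print(results)
```

Raising `max_workers` beyond what the machine can handle will not make the run faster. To go further you need more machines, which is where parallel testing comes in.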

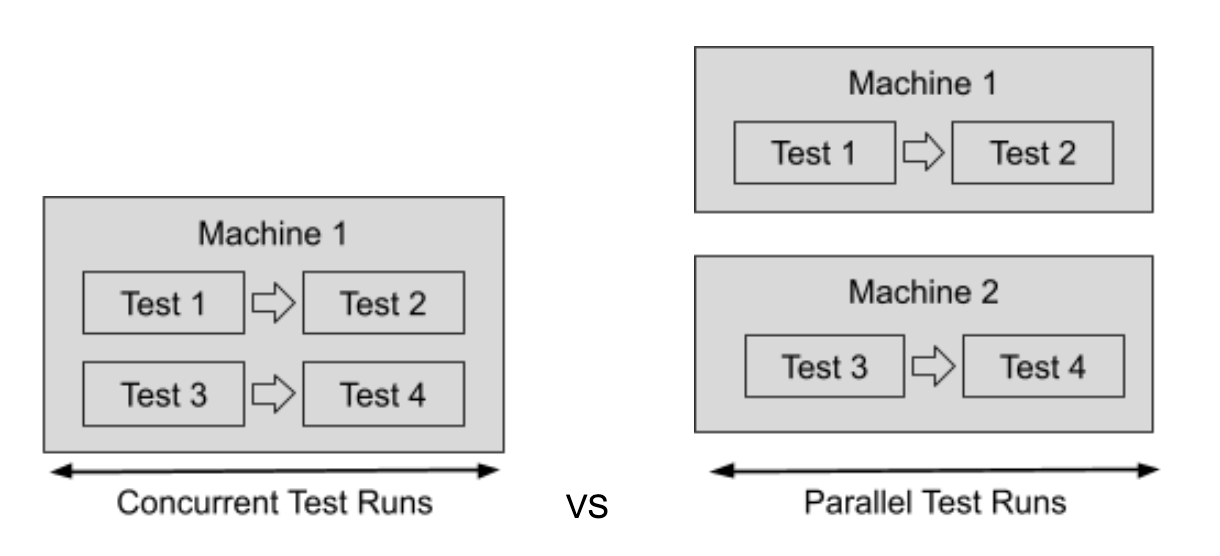

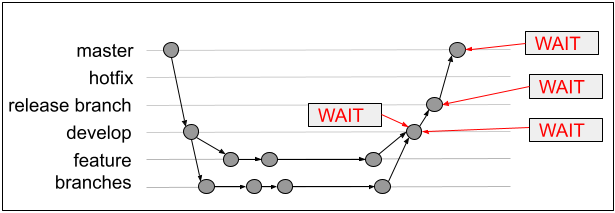

Let’s show an example of a development team and the effect parallel testing can have. Team Tiger uses a gitflow release strategy and has 2 developers working on different features. Wait time is incurred every time tests have to be run. For this example, assume there are 100 tests that each take 1 minute to run, for a total of 100 minutes when running sequentially. Both developers push their features to develop separately, and the 2 features are put into a release together.

If tests are run sequentially:

- Total wait time is 4 x 100 minutes = 400 minutes (about 6.7 hours)

- This would only give Team Tiger 1 deploy, maybe 2 deploys per day if the first deploy was early morning.

- Dev #2 waits 100 minutes for the tests triggered by Dev #1's push to develop to complete before being able to push to develop. Even though this developer could in theory move on to another task, experience has shown that they will most likely be context switching between the new task and getting this feature into develop, which reduces their efficiency.

If tests are run in parallel with 10 concurrent test runs:

- Total wait time is 4 x 10 minutes (100 tests / 10 parallel runs) = 40 minutes

- This would give Team Tiger the ability to do more than 10 deploys per day.

Result: running 10 tests in parallel cut Team Tiger's wait time by a factor of 10, giving them more chances to deploy and get feedback quicker.
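The arithmetic in this example can be checked with a short sketch. It assumes an idealized model where tests are spread evenly across workers and there is no startup or scheduling overhead. The function name `total_wait_minutes` is made up for illustration.

```python
import math


def total_wait_minutes(test_count, minutes_per_test, workers, runs):
    """Wall-clock wait across `runs` full executions of the suite."""
    # With an even split, the busiest worker runs ceil(n / workers) tests.
    per_run = math.ceil(test_count / workers) * minutes_per_test
    return per_run * runs


sequential = total_wait_minutes(100, 1, workers=1, runs=4)
parallel = total_wait_minutes(100, 1, workers=10, runs=4)

print(sequential, round(sequential / 60, 1))  # 400 6.7
print(parallel)                               # 40
print(sequential / parallel)                  # 10.0
```

Real suites rarely split this cleanly because test durations vary, so treat the 10x figure as an upper bound for 10 workers.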

Why parallelize tests?

The obvious reason to parallelize your tests is time. Faster test runs can improve lead time, deployment frequency, change failure percentage, and even mean time to recovery if a team includes testing in that process. Parallel testing also allows teams that have integrated tests into their CI/CD pipeline to execute them quickly enough to support continuous deployments.

Time is a big reason but not the only one. Parallel testing also improves the overall quality of an application by allowing teams to run more tests faster throughout the CI/CD workflow. When tests are slow, teams are tempted to only run subsets of tests or no tests at all in order to get a deployment out. Parallelizing tests allows them to run all tests needed to ensure the highest quality.

Another reason to parallelize tests is to remain agile when change is needed. Faster builds and deployments mean teams can make changes more often. In the event a crisis happens and there is a production issue (or some other urgent event), teams can make the necessary changes and get a tested fix out more quickly than they could otherwise.

How to parallelize tests

When we talk about scaling tests by running tests in parallel, we are talking about the test environment (versus the product environment where the product under test lives). A test environment has 2 different ways you can scale: distributing tests and distributing browsers (in the case where you are running UI tests).

Distributing tests is usually managed in one of the following ways:

- Test framework

- Continuous integration tool

- Tools that can automatically parallelize and scale tests

Distributing browsers is usually managed by one of the following:

- Tools that can launch multiple browsers

- Cross browser testing tools

Each of these approaches addresses the tests, test runners, splitting of the tests, and browser/API differently. There are pros and cons to each approach.

Distribution of Tests

Let the test framework parallelize tests. Not all test frameworks support parallelization; only some have it built in as an option.

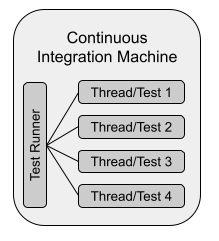

When a testing framework manages the parallelization, a common setup is like the following diagram:

In this approach you tell the test framework how many parallel processes you want. The test runner will spawn that many threads (or processes, depending on the framework). Test frameworks that support parallelization like this make it fairly easy to add parallel testing to your process. However, beware of common gotchas:

- The machine is limited by processing capacity. After 2-3 threads per CPU you may start seeing degradation of test performance. You may have to upgrade your machines significantly to support this parallelization approach.

- Only certain frameworks support parallelization. See chart below for some examples.

- Make the tests you write thread-safe.

Here is a non-exhaustive list of some test frameworks and their parallelization support:

If a framework doesn’t have parallel test execution support, there might be a third-party module you can install that supports parallelization. Some of these modules have a fee. Beware, though: in many cases these modules put additional strain on your machine's resources, so a misconfiguration can max out those resources, which ultimately slows your builds down or causes failures. A few examples of modules are:

Node: https://www.npmjs.com/package/mocha-parallel-tests

Rails: https://github.com/grosser/parallel_tests, https://github.com/ArturT/knapsack

PHPUnit: https://github.com/brianium/paratest

Let the continuous integration tool parallelize your tests. Build servers were not designed to be test environments, which is the main reason it has taken a long time for CI tools to get to where they are today when it comes to parallelizing tests.

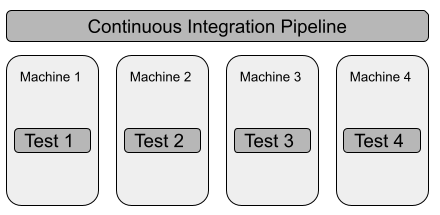

Just as each framework works a little differently, continuous integration tools differ in how they support parallelization. What they have in common is that the CI pipeline launches tests across multiple agents that you need to configure. You generally tell the CI tool how to split the tests by passing in test names or test suite names.
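One simple way to split tests across agents is to give every agent the full list of test names plus its own index, and let each agent pick its slice. The sketch below assumes that setup. `shard`, `agent_index`, and `agent_total` are illustrative names, not any particular CI tool's API.

```python
def shard(test_names, agent_index, agent_total):
    """Return the subset of tests that one agent should run."""
    if not 0 <= agent_index < agent_total:
        raise ValueError("agent_index must be in [0, agent_total)")
    # Sort first so every agent sees the same order, then take every Nth test.
    return sorted(test_names)[agent_index::agent_total]


tests = [f"test_{i:02d}" for i in range(10)]
for index in range(3):
    print(index, shard(tests, index, 3))
```

This round-robin split balances the number of tests per agent, not their duration. Splitting by recorded timings gives a more even wall-clock time but needs historical data.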

Sounds great, right? It is, in the sense that you can scale out parallelized testing using your build server. However, there are some downsides to this approach that you should be aware of.

- In principle you can add as many machines as you need with this approach, but they can get costly and hard to build and maintain. Using build server parallelization increases the number of builds, which is expensive, and it increases wait time for teams stuck behind backed-up builds. Read the blog post “Don’t Pay Your CI To Run Tests” to see more details on this.

- Another downside to this approach is lack of consolidated test results. There will be test results from each machine that you will want to consolidate to get an overall picture of quality. This can be a pain to do yourself.
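Consolidating results yourself can be sketched roughly as follows, assuming each machine reports a simple mapping of test name to status. That shape is an assumption for illustration; real CI tools usually emit report files that you would parse first.

```python
from collections import Counter


def consolidate(per_machine_results):
    """Merge {test_name: status} dicts from each machine into one view."""
    merged = {}
    for results in per_machine_results:
        for name, status in results.items():
            # A test reported twice usually means the split overlapped.
            if name in merged:
                raise ValueError(f"{name} was reported by more than one machine")
            merged[name] = status
    return merged, Counter(merged.values())


machine_a = {"test_login": "passed", "test_search": "failed"}
machine_b = {"test_checkout": "passed"}

merged, summary = consolidate([machine_a, machine_b])
print(summary["passed"], summary["failed"])  # 2 1
```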

Here are a few continuous integration tools and how they handle parallelizing tests:

One of the biggest concerns when relying on a continuous integration server to handle parallelization is cost. Continuous integration tools weren’t initially built with parallelization in mind. Most tools charge per parallel job which can get costly.

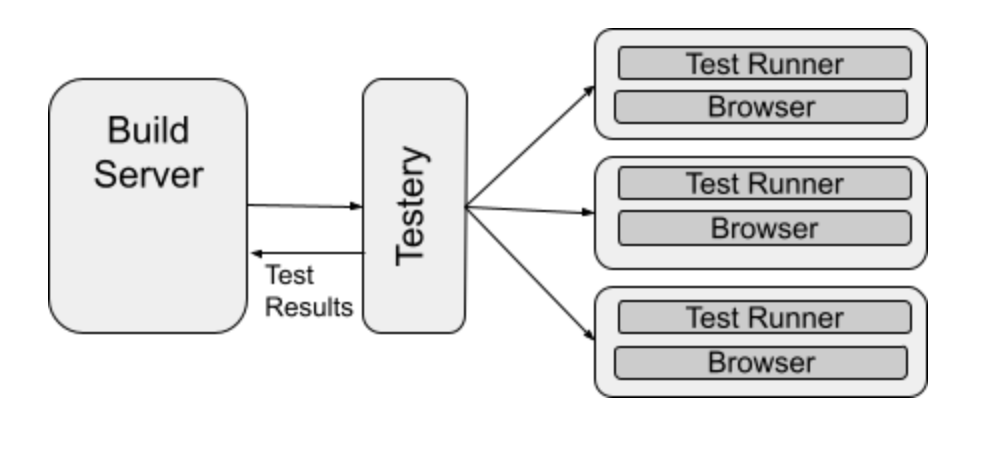

Tools that can automatically parallelize and scale your tests. We only have one tool in this category so far, and that tool is Testery. The reason why this tool is in a category on its own is because it can do the following that other tools can’t do:

- Automatically scales the test runners

- Automatically splits the tests

- Manages the test infrastructure for you

- Reduces load on your build server

- Consolidates the test results into a single view and sends the results back to CI (or anywhere else you want them)

- Doesn’t have the performance issues (lag) that tools using Selenium Grid have

Distribution of Browser Instructions

Another way of scaling web based tests is by having one master test execution machine launch multiple Web UI tests that each use a remote Selenium WebDriver. Instead of distributing tests (which is still needed for full parallelization) this approach distributes the browser instructions. This makes it pretty easy to test the same functionality using multiple web browsers.
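To picture what running the same functionality on multiple browsers means for the workload, the sketch below expands a list of tests into one job per test and browser pair. The test and browser names are placeholders, and the step that would actually send each job to a remote WebDriver is deliberately left out.

```python
from itertools import product


def build_matrix(test_names, browsers):
    """Pair every test with every browser it should run against."""
    return [{"test": t, "browser": b} for t, b in product(test_names, browsers)]


jobs = build_matrix(["test_login", "test_checkout"], ["chrome", "firefox", "safari"])
print(len(jobs))  # 6
print(jobs[0])    # {'test': 'test_login', 'browser': 'chrome'}
```

The job count grows as tests times browsers, which is why distributing browsers does not remove the need to distribute the tests themselves.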

Cross browser testing tools.

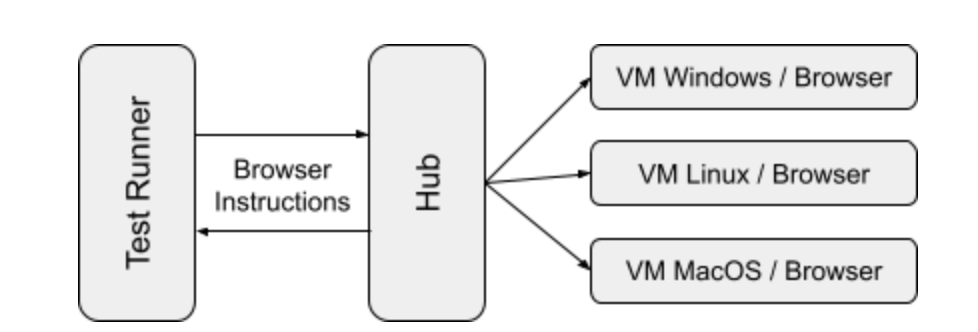

Cross browser testing is a great strategy to make sure that the web sites and web apps you create work across an acceptable number of web browsers. Selenium Grid is a solution that was created to allow for parallelized cross browser testing. Selenium Grid is a smart proxy server that makes it easy to run tests in parallel on multiple machines. This is done by routing commands to remote web browser instances, where one server acts as the hub. Selenium Grid doesn’t actually run the tests so you still need something to run the tests.

Here is how Selenium Grid works:

Using Selenium Grid allows you to run parallel tests using different browsers and operating systems. Among other benefits, it lets you mimic real user input, works with all major languages, and supports many browsers and frameworks.

The drawback of Selenium Grid is that the technology is based on the HTTP protocol. Tests run on a client machine and send each browser instruction across the wire, which causes slowness. HTTP wasn’t intended for long-running requests, and very often tests have to wait for something to happen. A load balancer may kill a request because it has been waiting too long, which produces flaky tests.

With Selenium Grid you only have access to the platforms, browsers, and browser versions on which your nodes are running, and its performance eventually degrades drastically as the number of nodes connected to the hub grows.

Remember, with all these tools you still need to manage your test runner and ensure parallelization is turned on correctly. Pay attention to the load these tools put on your CI/CD servers. Cross browser testing tools can cause heavier load than intended, causing your costs to spike or processes to fail.

Most cross browser tools on the market today are built on Selenium Grid. Here are a few of the options:

As you can see, there is a lot to consider when planning parallel test execution. If you need any help or just have questions, feel free to reach out to Testery.