Eliminate Flaky Tests

The ultimate goal of test automation is confidence in the quality of your application and deployments. But what happens when a test passes on one run and fails on the next, even though the code didn’t change? It lowers confidence in the reliability of the test results. Tests that fail intermittently like this are called flaky tests.

Flaky tests should be fixed as soon as they show up. If you don’t have time to fix one right away, schedule the fix for the near future. Teams that tolerate flaky tests tend to let more and more instability creep into the test suite. Eventually, the tests become so unreliable that teams stop depending on them. That wastes time and money, not to mention lowering the quality of your application.

A good process to follow is:

- Identify flaky tests

- Understand what is causing them to be flaky

- Fix each flaky test, or document it and schedule the fix

How to identify flaky tests

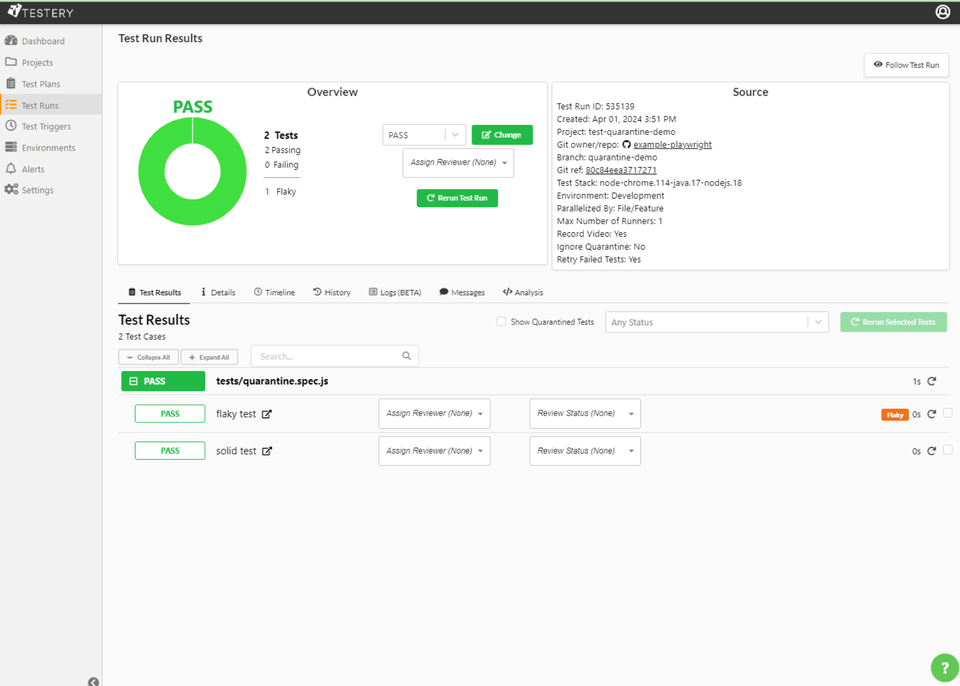

The first thing you have to do to identify flaky tests is run your test suite repeatedly. You should be doing this as good practice anyway. Once you are running your tests regularly, some testing frameworks have built-in functionality to identify flaky tests; others have plugins you can use. Testery, for instance, recently released flaky test detection functionality. When viewing a test run, you can easily identify flaky tests and filter the results to show only flaky tests. Testery is also working on insights and analytics around flaky tests over time.
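The idea of rerunning a test against unchanged code and looking for mixed results can be sketched in a few lines of Python. This is only an illustration, not how Testery or any particular framework detects flakiness; `classify_test` and the sample tests are hypothetical names invented for the example.

```python
from collections import Counter
from typing import Callable


def classify_test(test: Callable[[], None], runs: int = 20) -> str:
    """Run `test` repeatedly and report 'pass', 'fail', or 'flaky'."""
    outcomes = Counter()
    for _ in range(runs):
        try:
            test()
            outcomes["pass"] += 1
        except AssertionError:
            outcomes["fail"] += 1
    # Mixed results with no code change in between mean the test is flaky.
    if outcomes["pass"] and outcomes["fail"]:
        return "flaky"
    return "pass" if outcomes["pass"] else "fail"


def stable_test() -> None:
    """Hypothetical test that always passes."""
    assert 1 + 1 == 2


def broken_test() -> None:
    """Hypothetical test that always fails (a real bug, not flakiness)."""
    assert 1 + 1 == 3


def make_intermittent_test() -> Callable[[], None]:
    """Hypothetical test that fails on every third call."""
    calls = {"n": 0}

    def test() -> None:
        calls["n"] += 1
        assert calls["n"] % 3 != 0

    return test
```

A test that fails every time is reported as `fail` rather than `flaky`, which keeps genuine regressions separate from intermittent failures.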

What causes flaky tests

The most common things that cause flaky tests are:

- Asynchronous waits/sleeps

- Parallel testing issues

- Using selectors that aren’t resilient to application changes

- Data dependencies

Many people add a fixed wait or sleep to their tests to give the element they want to act on (like a button) time to render on the page. If the system under test is slow, the connection is slow, or a server is not responding, the element may not be ready when the wait ends, and the test fails. Often the underlying issue clears up by the next run, so the same test passes.
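One common alternative to a fixed sleep is to poll for the condition you actually care about, up to a timeout. The sketch below assumes nothing about your test framework: `wait_until` and `FakeButton` are hypothetical names, and `FakeButton` merely simulates an element that takes a moment to render.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool],
               timeout: float = 5.0,
               interval: float = 0.05) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


class FakeButton:
    """Hypothetical element that becomes visible after a delay."""

    def __init__(self, ready_after: float) -> None:
        self._ready_at = time.monotonic() + ready_after

    def is_displayed(self) -> bool:
        return time.monotonic() >= self._ready_at


# Usage: the wait ends as soon as the button is ready, and fails only
# if the button is still missing when the timeout expires.
button = FakeButton(ready_after=0.2)
button_ready = wait_until(button.is_displayed, timeout=2.0)
```

The test waits only as long as it needs to on a fast run and tolerates a slow one up to the timeout. Browser automation libraries usually ship an equivalent, such as Selenium's `WebDriverWait`, which is preferable to a hand-rolled helper when available.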

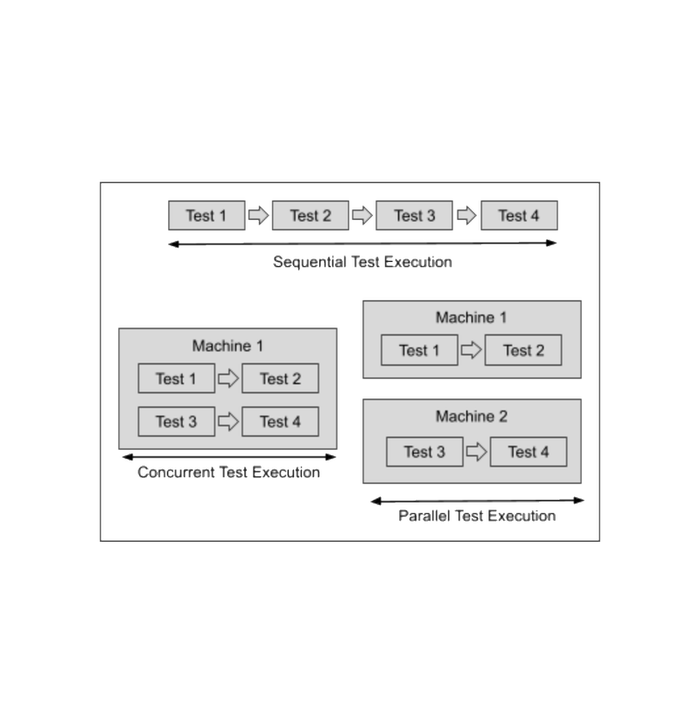

Running tests in parallel speeds up your test runs and is necessary for continuous testing and for things like automatic deployment verification. Tests need to be written with parallelization in mind, though. Follow best practices for parallel testing so that your tests execute properly. Tests that are not designed for it can pass when run sequentially but collide with each other when run in parallel.
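One way to avoid collisions is to give every test its own copy of any resource it writes to. The following sketch uses a temporary directory per test and a thread pool to simulate parallel execution; `export_report_test` and `run_in_parallel` are hypothetical names, and real suites would rely on their test runner's parallel mode instead.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import List


def export_report_test(worker_id: int) -> bool:
    """Hypothetical test: write a report, read it back, verify the contents."""
    # Each invocation gets its own directory, so parallel runs never
    # overwrite each other's files the way a shared fixed path would.
    with tempfile.TemporaryDirectory() as workdir:
        report = Path(workdir) / "report.txt"
        expected = f"report for worker {worker_id}"
        report.write_text(expected)
        return report.read_text() == expected


def run_in_parallel(workers: int = 8) -> List[bool]:
    """Run one copy of the test per worker and collect the results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(export_report_test, range(workers)))
```

If every copy of the test wrote to the same fixed path instead, one worker could read back another worker's contents, which is exactly the kind of failure that appears only under parallel execution.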

Selectors are how you locate elements on a page when writing web tests. Some selectors are more resilient to application changes than others. Auto-generated XPath selectors often look like “//div/div[2]/p/a” and can break whenever the underlying HTML structure changes. As a best practice, coordinate with developers to base selectors on meaningful ids or class names that are consistent with the components on the page (e.g. a CSS selector of “.login-button”).
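The difference is easy to demonstrate without a browser. The sketch below uses Python's standard `xml.etree.ElementTree` module, which supports a small XPath subset, to look up the same link before and after a layout change. The markup, the `find_link` helper, and the attribute-based path standing in for the “.login-button” CSS selector are all assumptions made for illustration.

```python
import xml.etree.ElementTree as ET

ORIGINAL = """
<body>
  <div>
    <div><h1>Welcome</h1></div>
    <div><p><a class="login-button" href="/login">Log in</a></p></div>
  </div>
</body>
"""

# The same page after a developer inserts a banner above the login link.
REDESIGNED = """
<body>
  <div>
    <div><h1>Welcome</h1></div>
    <div><p>New banner</p></div>
    <div><p><a class="login-button" href="/login">Log in</a></p></div>
  </div>
</body>
"""

# Positional path, similar to what a recorder might auto-generate.
POSITIONAL = "./div/div[2]/p/a"
# Attribute-based path; matches the exact class attribute value.
BY_CLASS = ".//a[@class='login-button']"


def find_link(markup: str, path: str):
    """Return the first element matching `path`, or None if nothing matches."""
    return ET.fromstring(markup).find(path)


positional_before = find_link(ORIGINAL, POSITIONAL)    # finds the link
positional_after = find_link(REDESIGNED, POSITIONAL)   # now points at the banner div
by_class_after = find_link(REDESIGNED, BY_CLASS)       # still finds the link
```

The positional path depends on the link living in the second inner `div`, so an unrelated layout change makes it miss. The class-based path depends only on the link itself.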

If tests make assumptions about the state of the data they depend on, they may fail seemingly at random. Tests should set up and tear down their data properly so that order and concurrency don’t affect the results.
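As a rough sketch of what setting up and tearing down data can look like, the example below uses Python's built-in `unittest` module. `InMemoryUserStore` is a hypothetical stand-in for a shared test database, and the test names are invented for illustration.

```python
import unittest
import uuid


class InMemoryUserStore:
    """Hypothetical stand-in for a shared test database."""

    def __init__(self) -> None:
        self._users = {}

    def add(self, name: str, email: str) -> None:
        if name in self._users:
            raise ValueError(f"user {name!r} already exists")
        self._users[name] = email

    def remove(self, name: str) -> None:
        self._users.pop(name, None)

    def email_of(self, name: str) -> str:
        return self._users[name]

    def count(self) -> int:
        return len(self._users)


# Shared by every test, the way a real test database would be.
STORE = InMemoryUserStore()


class UserTests(unittest.TestCase):
    def setUp(self) -> None:
        # Create the data this test needs under a unique name, so that
        # test order and concurrent runs cannot interfere with it.
        self.name = f"user-{uuid.uuid4().hex}"
        STORE.add(self.name, "someone@example.com")
        # Registered cleanups run even when the test itself fails.
        self.addCleanup(STORE.remove, self.name)

    def test_email_is_stored(self) -> None:
        self.assertEqual(STORE.email_of(self.name), "someone@example.com")

    def test_duplicate_name_is_rejected(self) -> None:
        with self.assertRaises(ValueError):
            STORE.add(self.name, "other@example.com")
```

Neither test assumes a record left behind by another test, and each removes what it created, so the store is empty again after the run regardless of execution order.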

Fix the flaky tests

When you find a flaky test, document it immediately. That could mean entering an issue in your bug tracking system or simply keeping a list in a spreadsheet such as Excel. The idea is to identify flaky tests and fix them quickly, so that your team doesn’t start ignoring failing tests.

Regardless of how you identify flaky tests, make sure you stay on top of them and get them fixed before confidence in your tests, and the quality of your application, declines.

Have flaky tests? Reach out to us and we'd be glad to guide you.